On version 13, I have experienced a -ton- of problems with SQL Locks, Deadlocks, Timeouts. I’ve probably spent a few hundred hours working on these topics.

This is extremely difficult work. I will try to offer some advice, but it’s hard to explain without writing a lot more.

Problem #1 - Missing/Bad Indexes

ERPNext is not fully optimized for performance. Sometimes, SQL indexes are missing that should exist. Other times, the Python code is doing inefficient or incorrect logic.

When Locks/Deadlocks/Timeouts happen, try to capture the related SQL queries.

- Configure your MariaDB server to use its Slow Query Log.

- Try to see what’s happening in realtime, using SQL statements like

SHOW FULL PROCESSLIST;

If you can find the queries causing the problems, you can take action. Such as optimizing indexes, or improving the Python code. The SQL command 'EXPLAIN' can help you determine if a query needs index optimization.

Problem #2 - Concurrency can produce Deadlocks

Many ERPNext tasks involve a lot of SQL tables. For example, Submitting a 'Delivery Note or ‘Sales Invoice’ can interact with SQL tables (DocTypes) like:

- Item

- Customer

- Sales Order and Sales Order Item

- Delivery Note and Delivery Note Item

- Sales Invoice and Sales Invoice Item

- Sales Taxes and Charges

- GL Entry

- Stock Ledger Entry (this table is especially challenging)

If you are Submitting these documents sequentially? Normally that is okay. But what happens if you try to post multiple documents concurrently? (for example, by using background Workers)

What can sometimes happen is a Deadlock. Two or more processes/threads trying to CRUD the same SQL records, at the same time. Neither can complete until the other releases locks. So they deadlock.

Solving this is extremely challenging. It took me a long while to gain control over this:

- In many cases I tried to improve the performance by editing Python, SQL, or improving indexes. The faster the process, the less time SQL objects are locked.

- If my task did not need a full rollback? Then I would

frappe.db.commit() where possible, to remove the locks earlier.

- I introduced my own “Safety Locking” mechanism:

By manually locking at the beginning, I force all other concurrent transactions to “wait” for locks to release. For example, Invoice #2 may have to wait a few seconds until Invoice #1 releases some locks.

Yes, this reduces concurrency (not ideal). But helps prevent deadlocks (really great). Ever since I introduced this concept into my code, Deadlocks are very, very rare in my environments.

Problem #3 - MariaDB doesn’t handle Locks gracefully

When MariaDB encounters long-running queries, or locks, the database tables can easily get “stuck” in 2 different states:

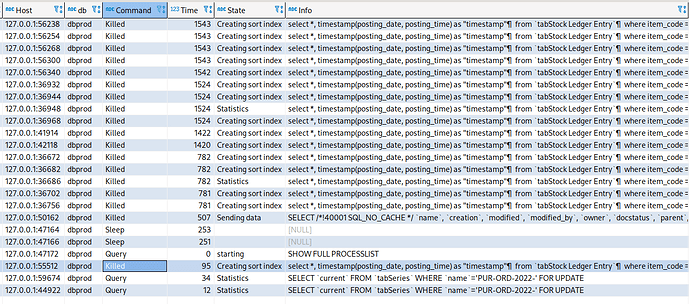

- “Creating sort index”

- “Sending data”

See screenshot below (from SQL command 'SHOW FULL PROCESSLIST')

Sometimes, MariaDB will remain “stuck” in these states literally forever. The queries will never time out. Even if you use SQL to “KILL” them, they will not disappear. Your database tables freeze. You cannot interact with them anymore. You must manually end the MariaDB process at the OS level.

It’s awful when this happens.  I’ve spend a ton of time reading about these 2 states. So far, I’ve found no way of teaching MariaDB to gracefully end them. Nothing I’ve tried makes MariaDB unfreeze.

I’ve spend a ton of time reading about these 2 states. So far, I’ve found no way of teaching MariaDB to gracefully end them. Nothing I’ve tried makes MariaDB unfreeze.

After dealing with this for 2+ years, my only plan is to get rid of MariaDB. And start using PostgreSQL instead. I’ve worked with other database software for over a decade (e.g. MS SQL). I’ve never had as many problems then, as I do today with MariaDB.

Problem #4 - ERPNext Design Decisions

Some of the ERPNext designs and architecture are adding to the problem. Things like:

- The way ‘Stock Ledger Entry’ and ‘GL Entry’ were designed.

- The number of hits that SQL table

tabSeries experiencing to issue new Naming Series values.

- Child and Parent table architecture.

- A few bugs in Frappe Framework around Document deletions.

- Certain stock and ledger code doing a lot of Looping.

- SQL queries executing without the User’s consent/intent (many Reports)

Finally, sometimes MariaDB also just chooses the wrong index, such as when users perform List Page filters.

There are no quick fixes to the above. To fully solve these problems, you’d have to fork your ERPNext, and begin maintaining your own version with your own custom solutions.

Summary

Hopefully some of this information was helpful. If nothing else, know this: You Are Not Alone. These problems are happening for other people too. The larger your database? The more transactions you try to process each day? The more-likely these problems will appear.