I am running…

Debian 9.3 stretch

ERPNext: v10.1.5 (master)

Frappe Framework: v10.1.2 (master)

I also have a test box running the standard production VM (Ubuntu 14.04) on the same versions.

I imported (without any trouble, on both machines) a large number of Production Orders in “Not Started” status.

Most consist of a tree of BOMs and raw materials, and each one is only 1 level deep, i.e. not a multi-level BOM.

e.g. BOM1 = item1 + item2 + item3

BOM2 = item4 + item5 + item6

BOM3 = BOM1 + BOM2 + item7 + item8

BOM4 = BOM3 + item9

They are separated like this to cater for manufacture and material transfers across multiple warehouses.
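To make the nesting concrete, here is a minimal Python sketch of the structure above. The dict layout and the `explode` and `depth` helpers are purely illustrative names of my own and are not ERPNext's API; each Production Order still only consumes its direct components, but the sketch shows how much sits underneath BOM4.

```python
# Illustrative only: a plain-dict model of the BOMs above, not ERPNext's API.
BOMS = {
    "BOM1": ["item1", "item2", "item3"],
    "BOM2": ["item4", "item5", "item6"],
    "BOM3": ["BOM1", "BOM2", "item7", "item8"],
    "BOM4": ["BOM3", "item9"],
}


def explode(name):
    """Recursively expand a BOM into the raw-material items beneath it."""
    if name not in BOMS:
        return [name]  # a raw material expands to itself
    items = []
    for component in BOMS[name]:
        items.extend(explode(component))
    return items


def depth(name):
    """Count the BOM levels at and below `name` (0 for a raw material)."""
    if name not in BOMS:
        return 0
    return 1 + max(depth(component) for component in BOMS[name])


print(explode("BOM4"))
print(depth("BOM4"))
```

In this model BOM4 is the only order that sits on top of two earlier layers of sub-assemblies, which is also the only one that fails for me.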

BOM1, BOM2 and BOM3 work OK, but when I “finish” BOM4 (the last one in that sequence), the STE- stock entry gets stuck on “Submitting” for a long time and then fails with a timeout.

I have checked the threads related to gunicorn session timeouts and followed the recommendations there:

To import large data, Increased nginx session timeout, updated gunicorn worker but how to increase the gunicorn session timeout?

Data Import Tool hangs on ERP Next instance running in Production Mode - #6 by gvyshnya

Gunicorn Worker how to increase - #10 by adnan

I increased “maxmemory” in the Redis cache config from 192mb to 512mb:

cat frappe-bench/config/redis_cache.conf | grep maxmemory

maxmemory 512mb

I tweaked supervisor.conf, adjusting the timeouts, the number of workers, etc.:

cat frappe-bench/config/supervisor.conf | grep guni


First settings (result: timeout):

command=/home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 4 -t 120 frappe.app:application --preload

Second settings (result: timeout):

command=/home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 4 -t 180 frappe.app:application --preload --limit-request-line 8190

Third settings (result: timeout):

command=/home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 5 -t 240 frappe.app:application --preload --limit-request-line 8190

All of these produce errors like this:

tail /home/frappe/frappe-bench/logs/web.error.log

[2018-03-06 02:48:25 +0000] [2367] [INFO] Starting gunicorn 19.7.1

[2018-03-06 02:48:25 +0000] [2367] [INFO] Listening at: http://127.0.0.1:8000 (2367)

[2018-03-06 02:48:25 +0000] [2367] [INFO] Using worker: sync

[2018-03-06 02:48:25 +0000] [2403] [INFO] Booting worker with pid: 2403

[2018-03-06 02:48:25 +0000] [2412] [INFO] Booting worker with pid: 2412

[2018-03-06 02:48:25 +0000] [2415] [INFO] Booting worker with pid: 2415

[2018-03-06 02:48:25 +0000] [2421] [INFO] Booting worker with pid: 2421

[2018-03-06 02:59:24 +0000] [2367] [CRITICAL] WORKER TIMEOUT (pid:2403)

[2018-03-06 02:59:25 +0000] [2580] [INFO] Booting worker with pid: 2580
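To pull just the timeouts out of web.error.log, something like the following Python sketch could be used. It assumes Python 3 run outside the bench env (the `%z` directive is not handled by `strptime` on the bench's Python 2.7), and the `worker_timeouts` helper and `SAMPLE` string are names I made up for illustration; the sample lines are copied from the log above.

```python
import re
from datetime import datetime

# Matches gunicorn lines of the form
# "[timestamp] [master pid] [CRITICAL] WORKER TIMEOUT (pid:N)".
TIMEOUT_RE = re.compile(
    r"^\[(?P<ts>[^\]]+)\] \[\d+\] \[CRITICAL\] WORKER TIMEOUT \(pid:(?P<pid>\d+)\)",
    re.MULTILINE,
)


def worker_timeouts(log_text):
    """Return a (timestamp, worker_pid) tuple for every WORKER TIMEOUT line."""
    return [
        (
            datetime.strptime(m.group("ts"), "%Y-%m-%d %H:%M:%S %z"),
            int(m.group("pid")),
        )
        for m in TIMEOUT_RE.finditer(log_text)
    ]


SAMPLE = """\
[2018-03-06 02:48:25 +0000] [2403] [INFO] Booting worker with pid: 2403
[2018-03-06 02:59:24 +0000] [2367] [CRITICAL] WORKER TIMEOUT (pid:2403)
[2018-03-06 02:59:25 +0000] [2580] [INFO] Booting worker with pid: 2580
"""

for when, pid in worker_timeouts(SAMPLE):
    print(when.isoformat(), pid)
```

Note that this only lists when a worker was killed and which pid it was; it does not tell me how long the stuck request itself had been running.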

If I use these settings (more workers, a shorter timeout and, I think, no limit on the request line):

command=/home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 6 -t 60 frappe.app:application --preload --limit-request-line 0

With these fourth settings it fails with the following errors:

[2018-03-06 14:41:37 +0000] [8616] [CRITICAL] WORKER TIMEOUT (pid:8681)

[2018-03-06 14:41:38 +0000] [8812] [INFO] Booting worker with pid: 8812

[2018-03-06 14:43:06 +0000] [8616] [CRITICAL] WORKER TIMEOUT (pid:8812)

[2018-03-06 14:43:06 +0000] [8812] [ERROR] Error handling request /

Traceback (most recent call last):

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/gunicorn/workers/sync.py", line 135, in handle

self.handle_request(listener, req, client, addr)

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/gunicorn/workers/sync.py", line 176, in handle_request

respiter = self.wsgi(environ, resp.start_response)

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/werkzeug/local.py", line 228, in application

return ClosingIterator(app(environ, start_response), self.cleanup)

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/werkzeug/wrappers.py", line 302, in application

return f(*args[:-2] + (request,))(*args[-2:])

File "/home/frappe/frappe-bench/apps/frappe/frappe/app.py", line 95, in application

frappe.db.rollback()

File "/home/frappe/frappe-bench/apps/frappe/frappe/database.py", line 768, in rollback

self.sql("rollback")

File "/home/frappe/frappe-bench/apps/frappe/frappe/database.py", line 176, in sql

self._cursor.execute(query)

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/pymysql/cursors.py", line 165, in execute

result = self._query(query)

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/pymysql/cursors.py", line 321, in _query

conn.query(q)

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/pymysql/connections.py", line 860, in query

self._affected_rows = self._read_query_result(unbuffered=unbuffered)

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/pymysql/connections.py", line 1061, in _read_query_result

result.read()

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/pymysql/connections.py", line 1349, in read

first_packet = self.connection._read_packet()

File "/home/frappe/frappe-bench/env/local/lib/python2.7/site-packages/pymysql/connections.py", line 1005, in _read_packet

% (packet_number, self._next_seq_id))

InternalError: Packet sequence number wrong - got 8 expected 1

[2018-03-06 14:43:06 +0000] [8812] [INFO] Worker exiting (pid: 8812)

[2018-03-06 14:43:07 +0000] [8832] [INFO] Booting worker with pid: 8832

I also increased that -t 60 to -t 360 and still got a timeout error.
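For reference, the fourth set of flags could also be kept in a gunicorn config file, which is plain Python, instead of on the supervisor command line. This is only a sketch: the file name `gunicorn.conf.py` and the idea of passing it with `-c` are my assumptions, and the bench-generated supervisor.conf does not do this. As far as I can tell from the gunicorn settings documentation, `limit_request_line = 0` does mean "unlimited", with 8190 being the largest explicit value allowed.

```python
# gunicorn.conf.py (hypothetical file name), loaded with:
#   gunicorn -c gunicorn.conf.py frappe.app:application
# The values mirror the fourth command line above.

bind = "127.0.0.1:8000"   # same as -b 127.0.0.1:8000
workers = 6               # same as -w 6
timeout = 60              # same as -t 60; seconds a silent worker may run before it is killed
preload_app = True        # same as --preload
limit_request_line = 0    # same as --limit-request-line 0 (0 = unlimited)
```

This does not change any behaviour by itself; it only makes it easier to see which timeout and worker count are actually in effect.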

Other settings I am using:

find . -name '*.conf' | xargs grep "client_max_body_size"

./frappe-bench/config/nginx.conf: client_max_body_size 50m;

./bench-repo/bench/config/templates/nginx.conf: client_max_body_size 50m;

To restart everything through supervisorctl I run:

sudo supervisorctl reread

sudo supervisorctl update

sudo supervisorctl stop all

sudo supervisorctl start all

After which, if I check with ps aux | grep gunicorn, I have:

frappe 8618 730 0 14:39 ? 00:00:12 /usr/bin/redis-server 127.0.0.1:11000

frappe 8619 730 0 14:39 ? 00:00:13 /usr/bin/redis-server 127.0.0.1:13000

frappe 8620 730 0 14:39 ? 00:00:11 /usr/bin/redis-server 127.0.0.1:12000

frappe 12224 730 6 16:27 ? 00:00:01 /home/frappe/frappe-bench/env/bin/python -m frappe.utils.bench_helper frappe schedule

frappe 12225 730 6 16:27 ? 00:00:01 /home/frappe/frappe-bench/env/bin/python -m frappe.utils.bench_helper frappe worker --queue default

frappe 12226 730 6 16:27 ? 00:00:01 /home/frappe/frappe-bench/env/bin/python -m frappe.utils.bench_helper frappe worker --queue long

frappe 12227 730 6 16:27 ? 00:00:01 /home/frappe/frappe-bench/env/bin/python -m frappe.utils.bench_helper frappe worker --queue short

frappe 12236 730 4 16:27 ? 00:00:01 /home/frappe/frappe-bench/env/bin/python /home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 6 -t 60 frappe.app:application --preload --limit-request-line 0

frappe 12237 730 3 16:27 ? 00:00:01 /usr/bin/node /home/frappe/frappe-bench/apps/frappe/socketio.js

frappe 12286 12236 0 16:27 ? 00:00:00 /home/frappe/frappe-bench/env/bin/python /home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 6 -t 60 frappe.app:application --preload --limit-request-line 0

frappe 12287 12236 0 16:27 ? 00:00:00 /home/frappe/frappe-bench/env/bin/python /home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 6 -t 60 frappe.app:application --preload --limit-request-line 0

frappe 12288 12236 0 16:27 ? 00:00:00 /home/frappe/frappe-bench/env/bin/python /home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 6 -t 60 frappe.app:application --preload --limit-request-line 0

frappe 12289 12236 0 16:27 ? 00:00:00 /home/frappe/frappe-bench/env/bin/python /home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 6 -t 60 frappe.app:application --preload --limit-request-line 0

frappe 12290 12236 0 16:27 ? 00:00:00 /home/frappe/frappe-bench/env/bin/python /home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 6 -t 60 frappe.app:application --preload --limit-request-line 0

frappe 12291 12236 0 16:27 ? 00:00:00 /home/frappe/frappe-bench/env/bin/python /home/frappe/frappe-bench/env/bin/gunicorn -b 127.0.0.1:8000 -w 6 -t 60 frappe.app:application --preload --limit-request-line 0

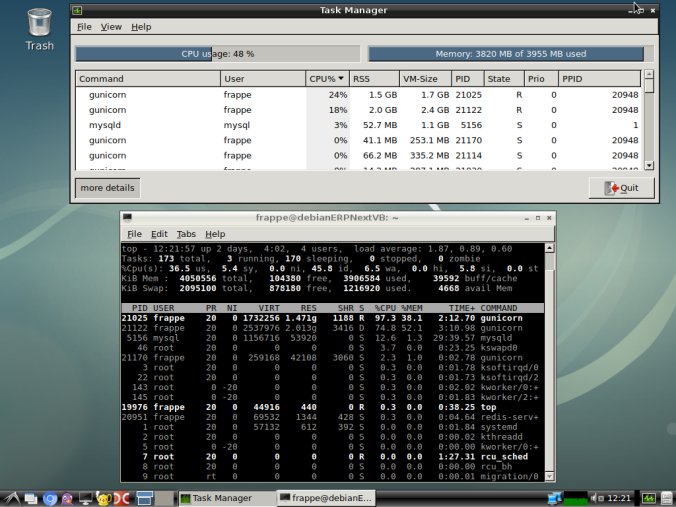

I don’t think disk space or RAM are a factor:

free -h

total used free shared buff/cache available

Mem: 3.8G 1.4G 431M 73M 2.0G 2.0G

Swap: 3.9G 124K 3.9G

df -h

/dev/mapper/DebianERPNext--vg-home 426G 24G 381G 6% /home

Any pointers or parameter tuning clues would be most welcome.